Anthropic Scored 220,000 Nvidia GPUs From SpaceX's Data Center

I was debugging some gnarly Python yesterday when Claude Code hit me with the dreaded rate limit. Again. You know that feeling—you're in flow state, the AI is actually being helpful, and boom—"You've reached your usage limit for this hour."

Well, those days might be ending. Anthropic just announced they're doubling Claude Code usage limits across all paid tiers and killing those annoying peak-hour throttles. The reason? They cut a deal with SpaceX for some serious compute power.

The Numbers Are Wild

We're talking about 300 megawatts of immediate compute capacity and access to 220,000 Nvidia GPUs by the end of May. That's not a typo—220,000 GPUs sitting in SpaceX's Colossus 1 facility, ready to power your late-night coding sessions.
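For a rough sense of scale, here's a back-of-the-envelope sketch that divides the quoted power figure by the quoted GPU count. The big assumption (mine, not the announcement's) is that the 300 MW and the 220,000 GPUs describe the same hardware; the function name is just for illustration.

```python
# Figures quoted in this post. Treating them as describing the same
# hardware is an assumption, not something the announcement states.
POWER_MW = 300
GPU_COUNT = 220_000


def watts_per_gpu(power_mw: float, gpu_count: int) -> float:
    """Average power budget per GPU, in watts."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return power_mw * 1_000_000 / gpu_count


per_gpu = watts_per_gpu(POWER_MW, GPU_COUNT)
print(f"{per_gpu:.0f} W per GPU")  # prints "1364 W per GPU"
```

If the assumption holds, that per-GPU figure would have to cover more than the chip itself: cooling, networking, and host servers all draw from the same budget.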

But here's where it gets interesting:

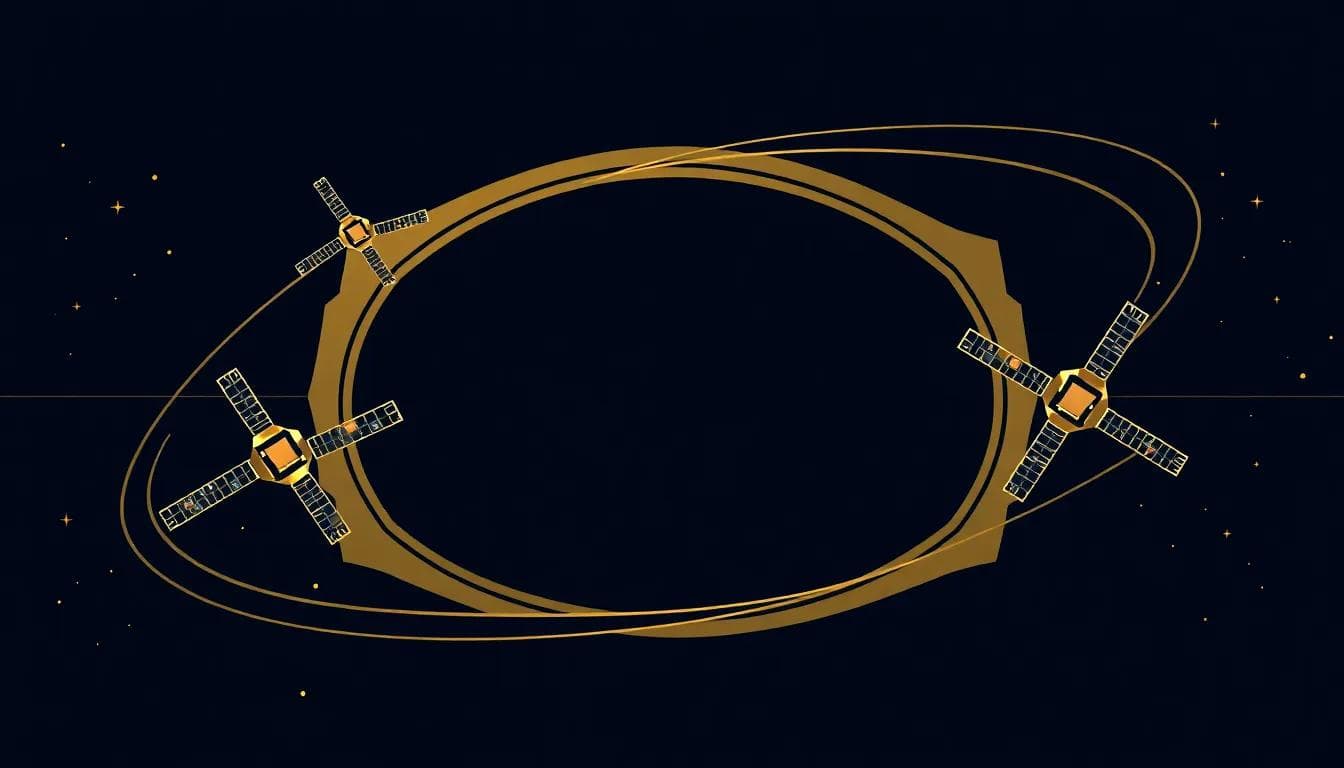

> Both companies expressed interest in developing "multiple gigawatts of orbital AI compute capacity"

Orbital. As in space. As in Elon Musk wants to put data centers in orbit to train AI models. I can't decide if this is brilliant or completely insane.

The Rate Limiting Backstory

Anthropic has been playing compute Jenga for months. They introduced weekly rate limits in August 2025, then added peak-hour limits in March. Now they're removing the peak-hour stuff entirely for Pro and Max users.

This tells me one of two things:

1. Those limits were artificial constraints to manage demand

2. They seriously underestimated how much compute they'd need

Either way, it suggests they were over-promising and under-delivering on capacity. Not a great look when you're trying to steal developers from OpenAI.

Musk's Infrastructure Play

This SpaceX partnership is fascinating for several reasons. First, it adds SpaceX alongside Amazon (Anthropic already has a deal for up to 5 GW there) as a serious compute backer. But more importantly, it brings Musk directly into Anthropic's ecosystem.

Remember, Musk also runs xAI. So now he's:

- Providing infrastructure to Anthropic

- Competing with them through xAI

- Potentially planning orbital compute infrastructure

The conflict-of-interest possibilities are chef's-kiss levels of Silicon Valley drama.

What This Actually Means for Developers

The immediate wins:

- No more peak-hour throttling on Claude Code

- Doubled rate limits across Pro, Max, Team, and Enterprise

- Higher API limits for Claude Opus models

- More predictable performance during business hours

I'm skeptical about the orbital compute stuff—that sounds like Musk being Musk. But the immediate capacity boost? That's real and it's happening now.

The timing is interesting too. This feels like a direct response to OpenAI's recent moves. Anthropic is essentially saying "we have the compute to compete now" after months of scarcity, whether artificial or genuine.

The Bigger Infrastructure War

What's really happening here is that compute capacity has become the primary competitive moat in AI. Not just model architecture or training techniques—raw access to GPUs.

Anthropic now has:

- Amazon: Up to 5 GW capacity

- SpaceX: 300 MW immediately, more planned

- Potentially orbital infrastructure (lol)

That's a serious war chest. The question is whether they can convert that compute into market share against OpenAI's entrenched position.
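To put those two announced numbers side by side, here's a quick sketch of each provider's share of the total. It treats Amazon's "up to 5 GW" as a full 5,000 MW, which is an upper bound rather than a delivered figure, and it ignores the orbital stuff since no capacity number exists for it. The dictionary and function names are mine.

```python
# Announced capacity in megawatts, as quoted in this post.
# Amazon's figure is an "up to" ceiling, so its share is a best case.
ANNOUNCED_MW = {"Amazon": 5_000, "SpaceX": 300}


def capacity_shares(capacity_mw: dict[str, float]) -> dict[str, float]:
    """Return each provider's fraction of the combined announced capacity."""
    total = sum(capacity_mw.values())
    if total <= 0:
        raise ValueError("total capacity must be positive")
    return {name: mw / total for name, mw in capacity_mw.items()}


shares = capacity_shares(ANNOUNCED_MW)
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
# prints:
# Amazon: 94.3%
# SpaceX: 5.7%
```

On paper, then, the SpaceX deal is a small slice of the total. What sets it apart is the word "immediately."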

My Bet: The orbital compute stuff is pure marketing theater, but this partnership gives Anthropic the infrastructure backbone to seriously compete with OpenAI on developer experience. The rate limit increases will drive adoption, especially among enterprise developers who've been burned by throttling during critical work. Within six months, we'll see Claude Code eating into Codex's market share—assuming Anthropic doesn't stumble on execution.